What’s your vote really worth?

Whenever a major political election comes around, there's always a big push to "get out and vote". A big deal is made about how every vote counts, and how if you don't participate, someone else will vote for you, meaning that the votes of others will carry relatively more power. For example, in a pool of 1 million voters, if you don't cast your ballot, each remaining voter will have a stronger impact, albeit by a mere one part in a million.
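As a rough check of that arithmetic, here is a minimal sketch. It assumes a single pool in which every ballot carries equal weight, and the function and variable names are purely illustrative.

```python
from fractions import Fraction


def vote_share(voters: int) -> Fraction:
    """Share of the total carried by one ballot when `voters` ballots are cast.

    Assumes every ballot weighs the same (a simplification).
    """
    return Fraction(1, voters)


pool = 1_000_000  # pool size taken from the example above

share_if_you_vote = vote_share(pool)         # everyone votes, you included
share_if_you_abstain = vote_share(pool - 1)  # everyone votes except you

# Relative gain in weight for each remaining voter when one person abstains.
relative_gain = share_if_you_abstain / share_if_you_vote - 1

print(f"share per voter if you vote:    {float(share_if_you_vote):.12e}")
print(f"share per voter if you abstain: {float(share_if_you_abstain):.12e}")
print(f"relative gain per voter:        {float(relative_gain):.3e}")
```

Under these assumptions the relative gain works out to exactly 1/999,999, which is where the "one part in a million" figure comes from.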

Voting is pushed as empowering and individualistic. In reality it's a completely statistical issue. Your own vote by itself is irrelevant in the context of millions of other votes. From a purely individual point of view, you may or may not cast your ballot, and the outcome of the election will be exactly the same for all intents and purposes. No butterfly effect applies here.

To make a pop-science analogy, this can be seen as a sort of poor man's quantum superposition. The voting booth can be considered a closed system, where anything that happens inside has no effect on the outcome of the election. The "you" that votes A, B, C or abstains can all coexist with no practical effect on the outside, all because of the extreme dilution of a single vote.

There is, however, some value in the intent to cast a vote in a certain way: by reflecting on your own choice, you can estimate the outcome of the election, at least as far as your own demographic goes. That is not greatly applicable knowledge, being only a guess about a portion of the voting population, but it's more consequential than actually casting a ballot.

To me this is interesting because it highlights the duality between perceived direct decision-making power and actually usable power. We're sold the idea that we have a say in things as active participants, while in reality the only true power resides in the ability to self-reflect and predict the direction of a herd, and even that is not something directly applicable in most cases (maybe to guess how the markets will react the next day?). The greater power is that of introspection. The very act of realizing and reasoning about the actual practical effect of voting, or lack thereof, is a step toward understanding the place of the self amidst a collective.

If the power structures were honest, they would plainly state that civic duty is not about casting a ballot, but about behaving like a statistical sample. It would not be good civic duty to behave as an observer of yourself and to act out of left field, driven by some sort of superego. Good civic duty is instead to act predictably, as that makes things easier for the managerial class in governmental institutions, as well as in private ones.

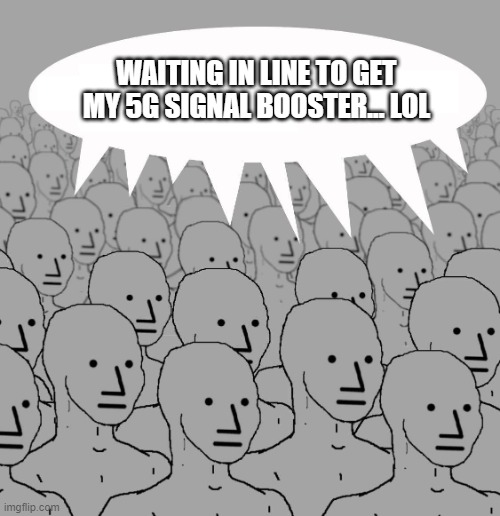

In practice, one could still go and vote, but, at least as a thought experiment, it's worth considering why the actual practice of casting a ballot is not an act of free will, but rather a statistical sampling of a collective mind that is an amalgam of popular culture shaped by mass media.

From a purely practical perspective, being a conformist and toning down critical thinking is necessary to make things work in a collective. There's no doubt that humanity gets in the way of productivity. As technology improves, we employ more and more robots to help us with labor, but robots aren't necessarily mechanical. Historically, the majority of humans have been employed to work in a very organized fashion, something that requires limited critical thinking and limited creativity.
