Quantization and resolution are two key concepts in computer science, and they lie at the root of the digital revolution. I think that even a cursory understanding of them can help in building a mental framework for seeking objectivity.

Quantization

For my generation, the term “digital” was popularized by Compact Discs. CDs look shiny and metallic and are read by lasers, so they fit the ideal of something new and futuristic very well.

Lasers, however, aren’t what makes CDs a digital medium. CDs are digital because the information they store is quantized. A CD reproduces its content perfectly because the set of values a sound sample can take is determined a priori.
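To make the idea of an a-priori set of values concrete, here is a minimal Python sketch. It assumes amplitudes normalized to the range [-1.0, 1.0] and 16 bits per sample, as used by CD audio; the symmetric mapping onto -32767..32767 and the helper names `quantize` and `dequantize` are illustrative choices, not the CD specification.

```python
# Minimal sketch: snap a continuous amplitude onto one of a fixed set of levels.
# Assumes samples normalized to [-1.0, 1.0]; helper names are hypothetical.

BITS = 16
MAX_LEVEL = 2 ** (BITS - 1) - 1  # 32767, the largest signed 16-bit value


def quantize(x: float) -> int:
    """Round a normalized amplitude to the nearest allowed integer level."""
    x = max(-1.0, min(1.0, x))  # clip out-of-range input
    return round(x * MAX_LEVEL)


def dequantize(level: int) -> float:
    """Map an integer level back to a normalized amplitude."""
    return level / MAX_LEVEL


samples = [0.0, 0.25, -0.3, 0.123456789, 1.0]
levels = [quantize(s) for s in samples]

# Copying the integers is exact: a copy of a copy is still identical.
copy = list(levels)
assert copy == levels

# The original amplitude is only approximated, within half a quantization step.
for s, q in zip(samples, levels):
    assert abs(dequantize(q) - s) <= 0.5 / MAX_LEVEL

print(levels)
```

Once a sample is an integer, every later copy is exact; the only loss happens once, at the moment of quantization, and it is bounded by half a step.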

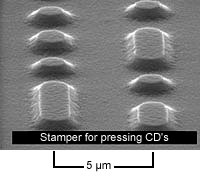

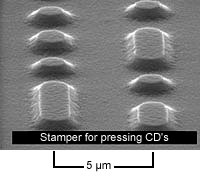

Quantization is a necessary step in encoding data for digital storage. It defines the relation between a microscopic physical deformation of a lump of atoms on the CD and the number that deformation represents.

Bits on a CD.

Quantization is also wasteful, because precision is achieved only by devoting an abundance of physical space to each piece of data. That space is there to avoid ambiguities that could arise from later deformations of the medium. Significant deformation would still lead to errors in the data, which calls for an encoding format that can cope with errors, but we won’t bother with that here.
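The trade-off between space and robustness can be shown with a toy model. It is an assumption and not how a CD physically encodes its data: each physical cell holds an analog value between 0 and 1, and packing more levels into a cell leaves less margin between neighboring levels. The names `encode`, `decode`, and `deform` are hypothetical.

```python
# Toy model (an assumption, not real CD encoding): each physical "cell" holds
# an analog value in [0.0, 1.0]. More levels per cell saves space but shrinks
# the margin that separates neighboring levels.


def encode(symbols: list[int], levels: int) -> list[float]:
    """Map each symbol (0 .. levels-1) to an evenly spaced physical value."""
    step = 1.0 / (levels - 1)
    return [s * step for s in symbols]


def decode(values: list[float], levels: int) -> list[int]:
    """Snap each (possibly deformed) value to the nearest allowed level."""
    step = 1.0 / (levels - 1)
    return [min(levels - 1, max(0, round(v / step))) for v in values]


def deform(values: list[float], amount: float) -> list[float]:
    """Simulate wear: push values alternately up and down by `amount`."""
    return [v + amount if i % 2 == 0 else v - amount for i, v in enumerate(values)]


one_bit = [0, 1, 1, 0]  # 4 bits in 4 cells (2 levels, 1 bit per cell)
two_bit = [0, 3, 2, 1]  # 8 bits in 4 cells (4 levels, 2 bits per cell)

wear = 0.2  # the same physical deformation applied to both
ok_1 = decode(deform(encode(one_bit, 2), wear), 2) == one_bit
ok_2 = decode(deform(encode(two_bit, 4), wear), 4) == two_bit
print(ok_1, ok_2)  # True False
```

With two levels per cell the margin is half the range, so a deformation of 0.2 is absorbed; with four levels the margin shrinks to one sixth and the same deformation flips every symbol. In this model, spending more space per bit is what buys exact reproduction.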

Quantization can be seen as a signed contract between the writer and future readers that spells out the exact data format and the number of bits used to digitize the input signal, be it a sound wave, an image, or anything else.
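Python’s standard `struct` module makes such a contract explicit. In this sketch the contract is assumed to be signed 16-bit little-endian integers (format `<h`); the sample values and the helpers `write` and `read` are hypothetical. A reader honoring the contract recovers the numbers exactly, while a reader assuming a different byte order gets different numbers from the same bytes.

```python
import struct

CONTRACT = "<h"  # assumed contract: signed 16-bit integers, little-endian


def write(levels: list[int], fmt: str) -> bytes:
    """Serialize integer levels according to a struct format string."""
    return b"".join(struct.pack(fmt, v) for v in levels)


def read(data: bytes, fmt: str) -> list[int]:
    """Deserialize bytes, trusting that they follow the given format."""
    return [v for (v,) in struct.iter_unpack(fmt, data)]


levels = [0, 8192, -9830, 4045, 32767]  # hypothetical quantized samples
data = write(levels, CONTRACT)

print(read(data, CONTRACT) == levels)  # True: same contract, same numbers
print(read(data, ">h") == levels)      # False: a big-endian reader breaks it
```

The bytes on the medium are identical in both reads; only the agreed format decides which numbers they mean.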

This is a fundamental perspective when one is trying to determine what is truthful and correct in a general sense. Of course, truth as applied to everyday life is infinitely more complex than the playback of a soundtrack, but the concept is valid nonetheless. Even if objective truth can’t be achieved, it sets a point of reference to keep in mind while striving for objectivity.

It should be noted that legal texts also tend to adopt a somewhat objective format. Again, this is achieved through a defined structure and an abundance of information. Legal text, however, is objective in the structure of its content rather than in its actual claims. In fact, in modern law, truth is to be found between two clearly partisan perspectives.

Resolution

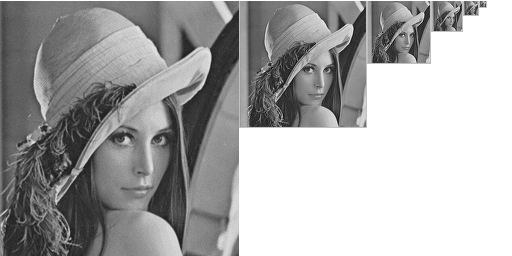

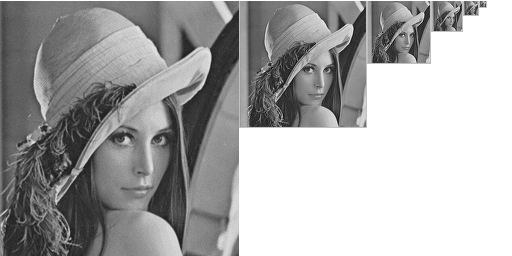

Digital storage relies on a finite physical allocation, and this raises the issue of resolution. Each piece of information is stored, retrieved, and processed at some level of resolution, or granularity. The concept is easiest to picture with an image: the number of pixels determines its spatial resolution, and a higher-resolution image can reveal details that are invisible at lower resolutions.

Same image at multiple resolutions.
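As a hedged illustration of how a detail can disappear at a lower resolution, consider a hypothetical 8×8 grayscale image containing a one-pixel-thick white line, downsampled by block averaging (a simple box filter, one of several possible methods). The statement “this image contains a white line” is true at full resolution and false at the coarser one.

```python
SIZE = 8  # hypothetical 8x8 image; 0 = black, 255 = white
image = [[0] * SIZE for _ in range(SIZE)]
image[3] = [255] * SIZE  # a one-pixel-thick white line on row 3


def downsample(img: list[list[int]], factor: int) -> list[list[int]]:
    """Average each factor x factor block into one pixel (box filter).

    Assumes a square image whose side is divisible by `factor`.
    """
    n = len(img) // factor
    return [
        [
            sum(
                img[r * factor + i][c * factor + j]
                for i in range(factor)
                for j in range(factor)
            )
            // (factor * factor)
            for c in range(n)
        ]
        for r in range(n)
    ]


def has_white_line(img: list[list[int]], threshold: int = 128) -> bool:
    """The statement under test: some row is bright from edge to edge."""
    return any(all(p >= threshold for p in row) for row in img)


small = downsample(image, 4)  # 8x8 -> 2x2
print(small)  # [[63, 63], [0, 0]]
print(has_white_line(image), has_white_line(small))  # True False
```

The line is still “in” the small image as a dimmer band, but at that resolution it no longer satisfies the statement.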

The same point of view can be applied to any problem. Someone might claim that their home is being invaded by scary monsters, and they would be right, as long as they are looking at the carpet through an electron microscope and spotting countless dust mites.

Scary monsters in your house.

This is, of course, an extreme case, but it illustrates how a statement can be true or false depending on the resolution at which one is operating.

We intuitively know that taking a broader perspective on things, instead of focusing on details, is one way to avoid worrying needlessly. The advice to “take a step back” or “look at it from the outside” can be thought of as a suggestion to lower the resolution on a problem so as not to get entangled in the noise.

Day traders also know the danger of zooming into a bar chart to look at just a few hours of 1-minute candles and feeling as if the market is constantly on the verge of exploding upward or going into a colossal dump. A quick zoom-out instead shows a much more stable price chart, one that is more comfortable, and also more productive, to operate on.
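Zooming out on a price chart amounts to lowering its time resolution. The sketch below merges every `n` consecutive 1-minute candles into one coarser candle, assuming candles are (open, high, low, close) tuples; the price series, the wick-less candles, and the helper names are hypothetical, chosen only to show the effect.

```python
from typing import NamedTuple


class Candle(NamedTuple):
    open: float
    high: float
    low: float
    close: float


def candles_from_prices(prices: list[float]) -> list[Candle]:
    """Build wick-less 1-minute candles from consecutive hypothetical prices."""
    return [Candle(a, max(a, b), min(a, b), b) for a, b in zip(prices, prices[1:])]


def resample(candles: list[Candle], n: int) -> list[Candle]:
    """Merge every n consecutive candles into one; drop an incomplete tail."""
    merged = []
    for i in range(0, len(candles) - n + 1, n):
        group = candles[i:i + n]
        merged.append(Candle(
            open=group[0].open,
            high=max(c.high for c in group),
            low=min(c.low for c in group),
            close=group[-1].close,
        ))
    return merged


def direction_changes(candles: list[Candle]) -> int:
    """Count how often consecutive candles switch between up and down."""
    ups = [c.close > c.open for c in candles]
    return sum(a != b for a, b in zip(ups, ups[1:]))


prices = [100, 101, 99.5, 101.5, 100, 102, 100.5, 102.5, 101, 103, 101.5, 103.5, 102]
minute = candles_from_prices(prices)  # 12 one-minute candles
coarse = resample(minute, 6)          # 2 six-minute candles
print(direction_changes(minute), direction_changes(coarse))  # 11 0
```

In this made-up series every minute reverses the previous one, yet both six-minute candles close higher than they open: the same data, read at a coarser resolution, tells a calmer story.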

Objectivity is a concept, and it is therefore made up. To carve out a space in which one can argue objectively, it’s important to settle on a resolution and stick to it for as long as possible. A debate can get very confusing if the people involved argue at different resolutions.

This shifting of resolution is common, sometimes to highlight the importance of a certain level of detail, but many times simply to find a level, a resolution, at which one’s own argument still holds. Needless to say, that is not a productive way to reach an objective conclusion.